Blog

The Oldest AI Blog in the Czech Republic

We have been writing about artificial intelligence since 2017. 1000+ articles, thousands of pages of thoughts, experiments, and reflections. No sensationalism, no ads.

Tag: GPT-2

Showing 13 of 13 articles

Archive 2020

An Interesting Article about GPT-3

Read an interesting article about GPT-3, one of the first to be published here!

Archive 2020

Have You Heard the Songs Sung by GPT-2?

OpenAI has introduced a new generative model that can create genre-specific music complete with lyrics! PS: It takes about 3 hours on a V100 GPU to generate 20 seconds of music...

Read Archive 2020

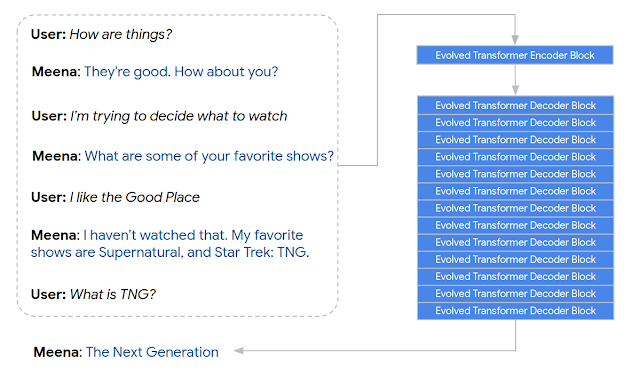

Archive 2020Have you heard about the conversational agent, or chatbot, named Meena?

At the end of January, Google AI introduced it. It is a robust model with 2.6 billion parameters. The model's architecture is based on 13 decoding...

Archive 2019

Great news! OpenAI has finally released its largest GPT-2 model, XL, with 1.5 billion parameters and 48 layers.

I like that people find the outputs of GPT-2 to be very convincing. The 'credibility score' for the largest model is 6.91 out of 10. That's just slightly…

Archive 2019

The Launch of 'Digital Philosophy' and Its First Tasks

The first session of 'Digital Philosophy' is behind us, and with it came a voluntary task: to take a look at Descartes' Meditations on First Philosophy. I took it upon myself…

Archive 2019

How Does the Salesforce AI Text Generation Model Work?

Just under a month ago, the Salesforce AI team released a new text generation model called CTRL. The algorithm works by allowing you to input a web link and…

Archive 2019

The Latest Artificial Intelligence Model for Natural Language Processing – ALBERT!

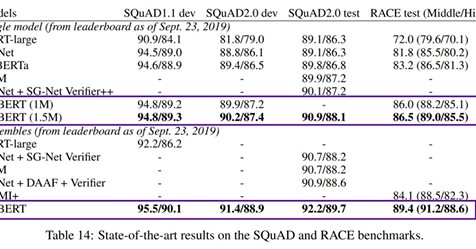

Among the top artificial intelligence models for natural language processing (BERT, RoBERTa, GPT-2, or Megatron), a new player, ALBERT, joined the ranks last week! ALBERT is brought to us by…

Archive 2019

Ladies and gentlemen, this week we are witnessing one groundbreaking step after another

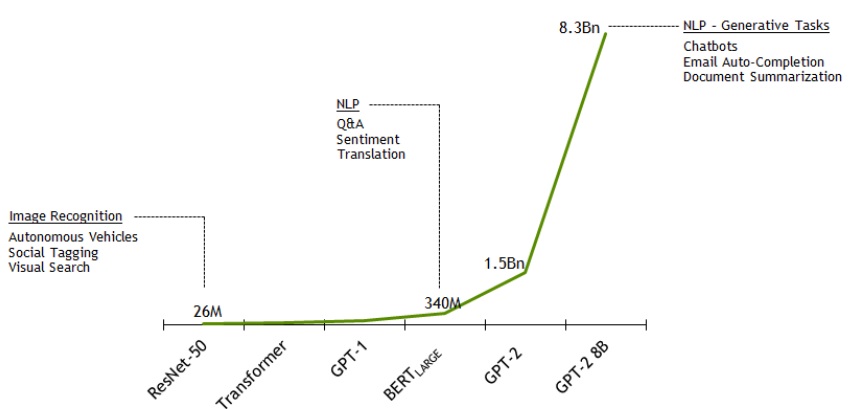

First, Nvidia releases the colossal Megatron (the largest model in the world, five times bigger than the largest GPT-2), to which OpenAI responds by releasing its large model with...

Archive 2019

Nvidia Announces It Has Trained the Largest Language Model in the World, GPT-2 8B!

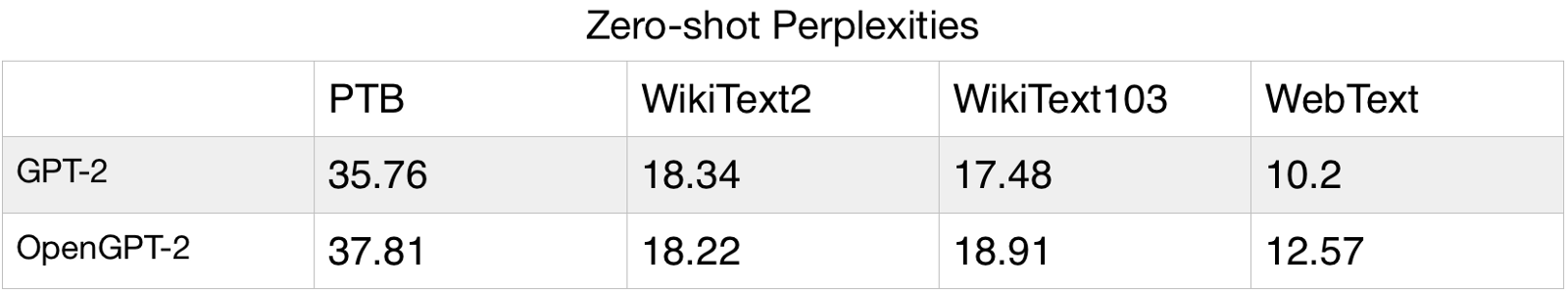

The model has 8.3 billion parameters, making it 24 times larger than BERT and 5 times larger than the previously largest GPT-2 from OpenAI. Nvidia employed parallelism that…

Archive 2019

Microsoft invests a billion dollars in OpenAI!

We write about OpenAI quite often. Not only were we amazed by their GPT-2 model, but we also noted how the famous Elon Musk helped establish it back in 2015, although he recently departed from the company.

Archive 2019

OpenAI Comes Up with Another Interesting Project – MuseNet!

This experiment uses a deep neural network (built on the same general-purpose technology as GPT-2) to predict musical notes instead of text. This is nothing new in AI,…

Archive 2019

Nietzsche Responded with AI to the Question: 'What is the Meaning of Human Life?'

Yesterday, I conducted a small experiment. I took my favourite model from OpenAI, called GPT-2. Imagine a vast artificial neural network the size of a bird's brain (with over 100 million parameters) trained on millions of interesting articles written by humans...

Archive 2019

GPT-2 Interviews Alpha Industries Ltd.

I am still enchanted by the possibilities of the GPT-2 model. Today, I fine-tuned the system for questions and answers. I provided it with information about our company, Alpha Industries, and devised four questions, giving it the correct answer to three. I was curious to see how it would respond to the fourth question, and I must say, the model surprised me once again!
