Great news! OpenAI has finally released its largest GPT-2 model (XL), with 1.5 billion parameters and 48 layers.

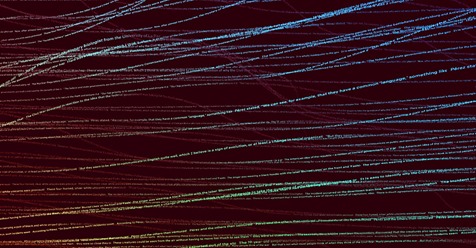

I like that people find GPT-2's outputs very convincing. For the largest model, the "credibility score" is 6.91 out of 10. That is only slightly higher than the 774M model's score (6.72) and noticeably higher than the 355M medium model's (6.07). So the gap between the large and XL models is relatively small (goodness, what a sentence :)). This was probably the proverbial last straw that led OpenAI to release the XL model.
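To make the "relatively small" gap concrete, here is a quick sketch that computes the step-to-step gains from the three scores quoted above. The function name `score_gains` is just something I made up for illustration; only the three numbers come from the post.

```python
# Credibility scores quoted above (out of 10), ordered by model size.
SCORES = {"355M": 6.07, "774M": 6.72, "1.5B": 6.91}


def score_gains(scores):
    """Return (smaller, larger, gain) for each pair of neighbouring model sizes.

    Hypothetical helper: relies on the dict being ordered smallest to largest.
    """
    sizes = list(scores)
    return [
        (small, large, round(scores[large] - scores[small], 2))
        for small, large in zip(sizes, sizes[1:])
    ]


if __name__ == "__main__":
    for small, large, gain in score_gains(SCORES):
        print(f"{small} -> {large}: +{gain:.2f}")
```

Going from 355M to 774M adds 0.65 points, while going from 774M to 1.5B adds only 0.19, which is the diminishing return described above.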

From a pragmatic standpoint, I should add that even the 774M model was already too large to train or fine-tune on a free Colab GPU.
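A back-of-the-envelope estimate suggests why. The sketch below rests on my own assumptions, not on anything in the release: 32-bit floats, an Adam-style optimizer that keeps two extra buffers per parameter, and no memory-saving tricks. It counts only weights, gradients, and optimizer state, so activations come on top. The helper name `training_memory_gib` is made up for this example.

```python
def training_memory_gib(n_params, bytes_per_value=4, copies=4):
    """Rough lower bound on training memory in GiB.

    Assumption: `copies` = weights + gradients + two Adam moment buffers,
    each stored as 32-bit floats. Activations are NOT included.
    """
    return n_params * bytes_per_value * copies / 2**30


if __name__ == "__main__":
    # Parameter counts as named in the post.
    for name, n_params in [("355M", 355e6), ("774M", 774e6), ("1.5B", 1.5e9)]:
        gib = training_memory_gib(n_params)
        print(f"{name}: ~{gib:.1f} GiB before activations")
```

Under these assumptions the 774M model already needs roughly 11.5 GiB before a single activation is stored. As far as I know, free Colab GPUs at the time offered on the order of 12 to 16 GB, so there is little or no room left for an actual training batch.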

Source: https://openai.com/blog/gpt-2-1-5b-release/…

GitHub: https://github.com/openai/gpt-2-output-dataset

Social impact: https://d4mucfpksywv.cloudfront.net/papers/GPT_2_Report.pdf

Paper: https://d4mucfpksywv.cloudfront.net/…/language_models_are_u…

Original source: wordpress