Ladies and gentlemen, this week we are witnessing one groundbreaking step after another

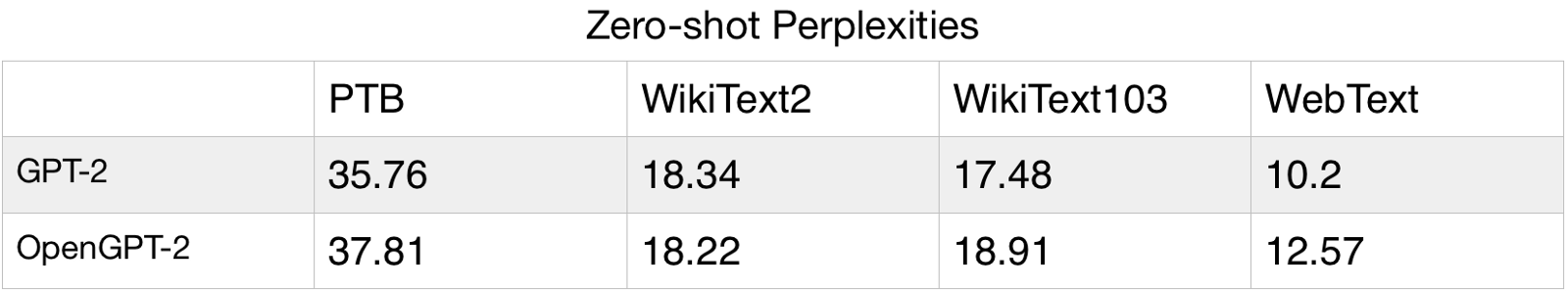

First, Nvidia released the colossal Megatron (the largest model in the world, five times bigger than the largest GPT-2), and OpenAI responded by releasing its large model with 800 million parameters. That move has today prompted the "protest" group OpenGPT-2 to release a model with 1.5 billion parameters, essentially a clone of the not-so-humble GPT-2 model. An ordinary AI developer can only marvel and wonder which model to try first.

I asked today’s model (1.5B OpenGPT-2) to finish the sentence “Wise people should…”. Here is how it continued:

“ … have compassion for the poor. If they do not, they must reckon with ignorant thinking and a poor economy.

Countries with high social spending are countries with the greatest poverty. The higher the state’s social security spending, the higher the poverty rate and the more expensive the social security system. In fact, countries with the lowest poverty rates – the lower your state's social security spending, the higher the poverty rate.”

If you want to read more about this model or try it out for yourself, take a look here: https://medium.com/…/opengpt-2-we-replicated-gpt-2-because-…

Original source: wordpress