The Latest Artificial Intelligence Model for Natural Language Processing – ALBERT!

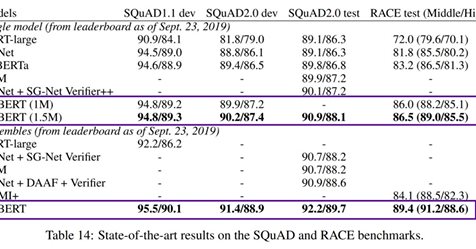

Among the top artificial intelligence models for natural language processing (BERT, RoBERTa, GPT-2, or Megatron), a new player, ALBERT, joined the ranks last week! ALBERT is brought to us by Google Research and the Toyota Technological Institute at Chicago. What makes this model particularly interesting is not only its fantastic results on classic benchmarks such as GLUE, RACE, and SQuAD, but also the fact that it is smaller than its predecessors! For instance, the old BERT x-large has approximately 1.27 billion parameters, whereas ALBERT x-large has “only” 59 million parameters.

How did the authors manage to increase accuracy while simultaneously reducing the number of “brain cells”?

There are three reasons for this:

1 — Factorized Embedding Parameterization

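This refers to a more efficient use of parameters. Instead of one large embedding matrix that maps each word of the vocabulary directly to a vector of the full hidden size, ALBERT uses two smaller matrices. The one-hot vector is first projected into a small embedding space with far fewer dimensions, and only then projected up to the hidden size. With a vocabulary of size V, hidden size H, and embedding size E, the embedding parameters drop from V × H to V × E + E × H, which is a large saving when E is much smaller than H.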
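To get a feel for the saving, here is a minimal sketch that simply counts embedding parameters both ways. The function name `embedding_params` is made up for this post, and the sizes (a vocabulary of 30,000, a hidden size of 2048, an embedding size of 128) are illustrative values chosen for the example, not something this article takes from the authors' code.

```python
from typing import Optional


def embedding_params(vocab_size: int, hidden_size: int,
                     embedding_size: Optional[int] = None) -> int:
    """Count word-embedding parameters (hypothetical helper for illustration).

    Without embedding_size: one V x H matrix.
    With embedding_size:    a V x E matrix followed by an E x H projection.
    """
    if embedding_size is None:
        return vocab_size * hidden_size
    return vocab_size * embedding_size + embedding_size * hidden_size


# Illustrative sizes (assumptions for this example).
V, H, E = 30_000, 2048, 128

full = embedding_params(V, H)           # single large matrix
factorized = embedding_params(V, H, E)  # two smaller matrices

print(f"single matrix: {full:,} parameters")
print(f"factorized:    {factorized:,} parameters")
```

With these numbers the factorized version needs roughly a fifteenth of the parameters of the single matrix.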

2 — Cross-Layer Parameter Sharing

ALBERT also shares parameters across all layers: the same Feed Forward Network and Attention weights are reused in every layer instead of each layer keeping its own copy. Simplistically, imagine a brain that passes a thought through the same centre several times rather than through many separate centres.
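The effect of sharing on model size can be sketched with a rough parameter count. This is a back-of-the-envelope estimate under stated assumptions, not the authors' accounting: it assumes a standard Transformer encoder layer (four attention projections with biases, a two-layer feed-forward network, two layer norms), and the function names and the sizes used (24 layers, hidden size 2048, feed-forward size 8192) are chosen for illustration.

```python
def encoder_layer_params(hidden_size: int, ffn_size: int) -> int:
    """Approximate parameter count of one Transformer encoder layer."""
    # Query, key, value and output projections, each H x H plus a bias.
    attention = 4 * (hidden_size * hidden_size + hidden_size)
    # Feed-forward network: H -> F and F -> H, each with a bias.
    ffn = (hidden_size * ffn_size + ffn_size) + (ffn_size * hidden_size + hidden_size)
    # Two layer norms, each with a scale and a shift vector.
    layer_norms = 2 * 2 * hidden_size
    return attention + ffn + layer_norms


def encoder_stack_params(num_layers: int, hidden_size: int, ffn_size: int,
                         share_across_layers: bool) -> int:
    """Parameter count of the whole stack, with or without sharing."""
    per_layer = encoder_layer_params(hidden_size, ffn_size)
    # With sharing, every layer reuses one set of weights.
    return per_layer if share_across_layers else num_layers * per_layer


# Illustrative sizes (assumptions for this example).
separate = encoder_stack_params(24, 2048, 8192, share_across_layers=False)
shared = encoder_stack_params(24, 2048, 8192, share_across_layers=True)

print(f"separate weights per layer: {separate:,}")
print(f"one shared set of weights:  {shared:,}")
```

Under these assumptions the unshared stack comes to about 1.2 billion parameters and the shared one to about 50 million, which is the same order of magnitude as the gap between the two x-large models mentioned above. Note that sharing reduces the number of stored weights, not the amount of computation: the input still passes through all 24 layers.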

3 — The SOP (Sentence Order Prediction) Algorithm replaces NSP (Next Sentence Prediction)

The authors of RoBERTa had already noticed that NSP was not very effective. The authors of ALBERT go a step further and replace it with their own improved task, SOP. In NSP, the correct example is a pair of consecutive segments from the same document, and the incorrect example pairs a segment with one taken from a different document. In SOP, both segments always come from the same document: the correct example keeps them in their original order, and the incorrect example swaps them. Because the topic is identical in both cases, ALBERT cannot solve the task by predicting the topic alone and has to learn a more nuanced relationship between individual sentences.
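The difference between the two tasks is easiest to see in how the training examples are built. The sketch below is a simplified illustration, not the authors' data pipeline: the function names are made up, segments are plain strings, and the 50/50 split between correct and incorrect examples is an assumption of this example.

```python
import random


def make_nsp_example(segment_a, segment_b, other_doc_segment, rng):
    """NSP-style example: the negative uses a segment from another document."""
    if rng.random() < 0.5:
        return (segment_a, segment_b), 1        # consecutive segments
    return (segment_a, other_doc_segment), 0    # second segment from elsewhere


def make_sop_example(segment_a, segment_b, rng):
    """SOP-style example: the negative is the same pair in swapped order."""
    if rng.random() < 0.5:
        return (segment_a, segment_b), 1        # original order
    return (segment_b, segment_a), 0            # reversed order


rng = random.Random(0)
first = "ALBERT shares weights across layers."
second = "This makes the model much smaller."
unrelated = "The recipe calls for two eggs."

print(make_nsp_example(first, second, unrelated, rng))
print(make_sop_example(first, second, rng))
```

In the NSP negative the second segment is about something else entirely, so a model can spot it from the topic alone. In the SOP negative both segments are on topic, and only their order gives the answer away.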

In summary, a new set of models for text processing has emerged that is highly accurate while occupying less space.

Sources:

https://medium.com/…/meet-albert-a-new-lite-bert-from-googl…

Original source: wordpress