Blog

The Oldest AI Blog in the Czech Republic

We have been writing about artificial intelligence since 2017. 1000+ articles, thousands of pages of thoughts, experiments, and reflections. No sensationalism, no ads.


Tag Filter: Unsupervised Learning


Showing 24 of 24 articles

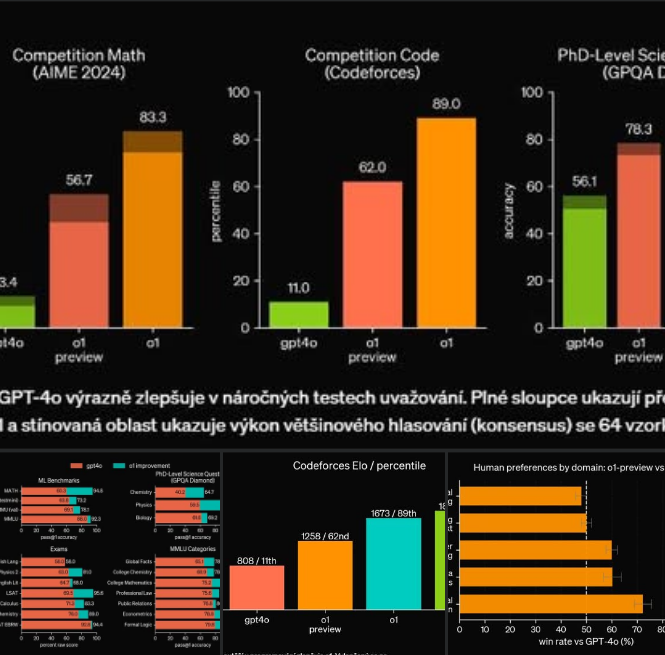

The Latest Generation of Models – o1-preview

OpenAI today unveiled o1-preview, a small revolution in artificial intelligence. I have great news for you! Introducing OpenAI o1-preview – our latest generation of artificial intelligence models,…

Read

ChatGPT – hype or a fundamental innovation?

Is ChatGPT just empty hype, or a fundamental innovation? Today, it might be a more interesting companion for 'conversation' than most people. How will ChatGPT influence education, HR…

Read

A Key Episode on Artificial Intelligence Released on the Deep Talks Podcast

In the Deep Talks podcast, hosted by Czech influencer and writer Petr Ludwig, we discussed how, specifically, artificial intelligence will change our world and our daily lives...

Read

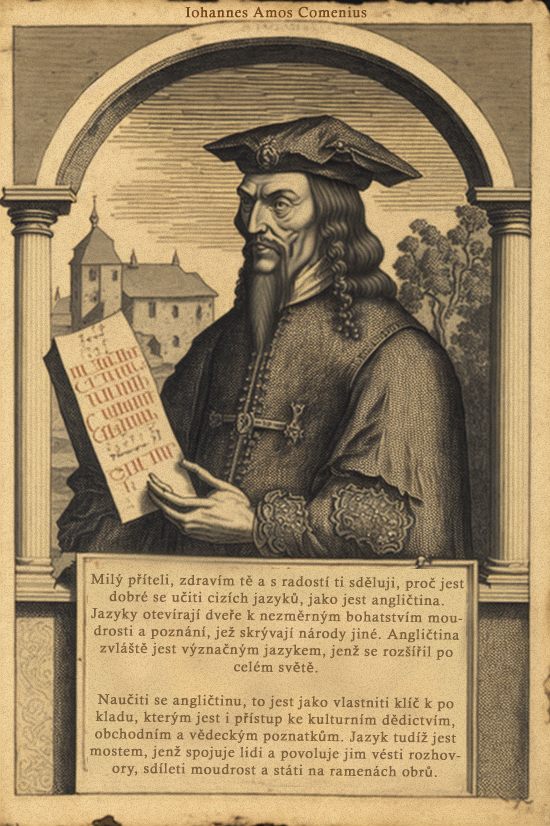

DigiKomenský – The Greatest Teacher of All Time in the Form of Jan Amos Komenský – For Teaching Children and Self-Learners

Irdies asked what DigiKomenský might look like, so I decided to give it a try and ran a small experiment using GPT-4, or Jan…

Read

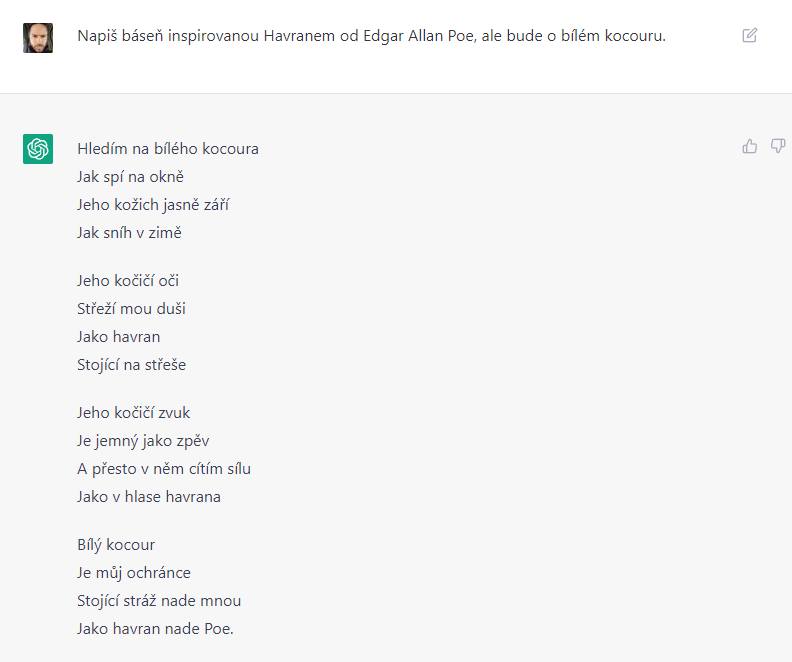

A Sample of GPT-4

One of my experiments with GPT-4: what might an Edgar Allan Poe poem called 'The White Cat' look like? Here is the very first attempt without…

Read

OpenAI Unveils Its Latest Deep Learning Model – GPT-4

In just two hours, we will finally learn what we have been waiting months for! OpenAI will unveil the latest milestone in deep learning – GPT-4. This…

Read

Artificial Intelligence Conference in Education (Microsoft)

Greetings from the Artificial Intelligence in Education conference. Today, Alenka and I presented the enhanced DigiHavel 2.0 to teachers, entrepreneurs, and politicians, and discussed how...

Read

Archive 2022

A Few Examples of ChatGPT

A few examples of ChatGPT. This model belongs to the GPT-3.5 family (not GPT-3, as is often stated). Like InstructGPT, it is fine-tuned with reinforcement learning from human feedback (RLHF)...

Read

Archive 2022

Google's Minerva Can Solve a Third of Practice Problems in University Mathematics, Physics, Chemistry, Economics, and Biology!

We have another exciting development for language model enthusiasts. The Minerva model is based on Google's Pathways Language Model (PaLM), which has 540 billion parameters. It was trained on...

Read

Archive 2019

Andrew Ng Has Created a New Course: 'AI For Everyone'

In this classic four-week course, Ng demonstrates for the first time that AI is not just for engineers. If you want to work better with artificial intelligence in your company and…

Read

Archive 2019

GPT-2 Interviews Alpha Industries Ltd.

I am still enchanted by the possibilities of the GPT-2 model. Today, I fine-tuned the system for questions and answers. I provided it with information about our company, Alpha Industries, and devised four questions, giving it the correct answer to three. I was curious to see how it would respond to the fourth question, and I must say, the model surprised me once again!

Read

Archive 2019

The Global Race in Robotics: Will Europe Fall Behind the USA and China in Artificial Intelligence?

“Focusing on the human being” is the cornerstone of the European Union's approach to establishing rules for the use of artificial intelligence. However, some warn that this phrase may...

Read Archive 2019

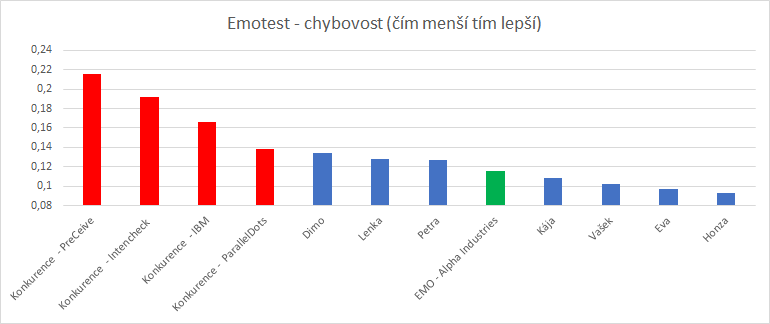

Archive 2019I bring bad news. We have improved the Czech and English emotion detector!

We have modernised the algorithms, so you can now see which words the neural network places greater weight on (the AI attention button in the bottom right). We also used much larger and more diverse datasets for training...
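The excerpt does not describe the detector's architecture, so this is only a minimal sketch, assuming a simple attention-pooling layer of the kind such a button typically visualises: each word gets a raw relevance score, a softmax turns the scores into weights that sum to 1, and the sentence vector is the weighted average of the word vectors. The words, vectors, and scores below are hypothetical toy values, not the detector's data.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_pool(word_vectors, scores):
    """Return the attention-weighted average of word vectors and the weights."""
    weights = softmax(scores)
    dim = len(word_vectors[0])
    pooled = [0.0] * dim
    for weight, vector in zip(weights, word_vectors):
        for i in range(dim):
            pooled[i] += weight * vector[i]
    return pooled, weights


# Hypothetical toy input: three words, 2-dimensional vectors, raw scores.
words = ["not", "very", "happy"]
vectors = [[0.1, 0.9], [0.3, 0.2], [0.8, 0.7]]
raw_scores = [1.5, 0.2, 2.0]

pooled, weights = attention_pool(vectors, raw_scores)
# List words from most to least attended, which is what a heatmap would highlight.
for word, weight in sorted(zip(words, weights), key=lambda pair: -pair[1]):
    print(f"{word}: {weight:.2f}")
```

The weights, not the pooled vector, are what an "attention" view would colour word by word.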

Read Archive 2019

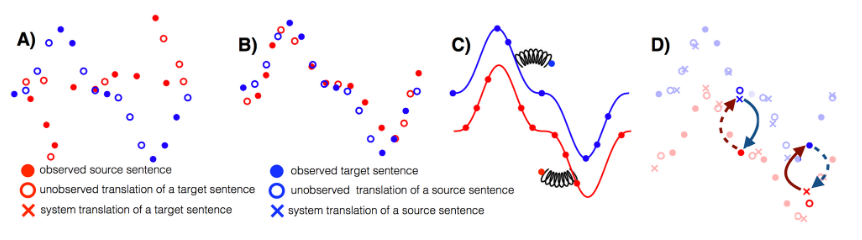

Archive 2019Unsupervised MT - Machine Learning Without a Teacher

It is still more of a concept, with relatively weak results, but I am surprised that it works at all. Let’s take a look at machine translation. Traditionally…

Read Archive 2018

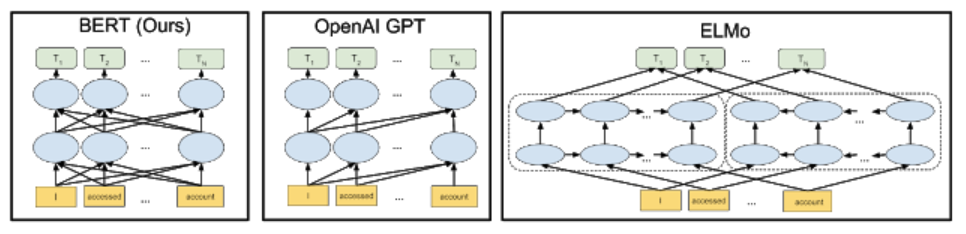

Archive 2018This Week, Google Unveiled Its Latest Technological Toy – Bidirectional Encoder Representations Transformers, or BERT

How does BERT differ from traditional NLP models like word2vec and GloVe? Word2vec and other models generate context-free word embeddings. Each word...
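A context-free embedding is essentially a lookup table: one fixed vector per word, whatever sentence it appears in. A minimal sketch with a hypothetical toy table (these are not real word2vec or GloVe vectors) shows why "bank" gets the same vector next to "river" and next to "money".

```python
# Hypothetical toy table; real word2vec or GloVe vectors have hundreds of dimensions.
EMBEDDINGS = {
    "the": [0.1, 0.1, 0.1],
    "river": [0.9, 0.1, 0.0],
    "money": [0.0, 0.2, 0.9],
    "in": [0.2, 0.2, 0.2],
    "bank": [0.4, 0.5, 0.1],
}


def embed(sentence):
    """Context-free lookup: every token maps to the same fixed vector."""
    return [EMBEDDINGS[token] for token in sentence.lower().split()]


river_sentence = embed("the river bank")
money_sentence = embed("money in the bank")

# 'bank' is the last token in both sentences and gets the identical vector,
# even though its meaning differs.
print(river_sentence[-1] == money_sentence[-1])  # True
```

A contextual model such as BERT instead computes each token's vector from the whole sentence, so the two occurrences of "bank" would generally receive different vectors.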

Read

Archive 2018

A New Kind of AI – Augmented Random Search

Recently, a phenomenal topic has emerged among AI researchers. A new kind of AI has been invented! The algorithm is so simple that you don't need any complex framework like TensorFlow to implement it…
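To show how little machinery the idea needs, here is a minimal sketch of basic Augmented Random Search in plain Python: perturb the parameters in random directions, evaluate each direction forwards and backwards, keep the best directions, and step along them scaled by the reward spread. Assumptions: the `reward` function is a hypothetical toy quadratic standing in for environment rollouts of a linear policy, and the state normalisation used by the full method is omitted.

```python
import random
import statistics

# Hypothetical target parameters for the toy objective below.
TARGET = [1.0, -2.0, 0.5]


def reward(theta):
    """Toy stand-in for an episode return: maximal (0) when theta == TARGET."""
    return -sum((t - g) ** 2 for t, g in zip(theta, TARGET))


def ars(dim, steps=200, n_dirs=8, top_b=4, step_size=0.02, noise=0.05, seed=0):
    """Basic Augmented Random Search (no state normalisation) on reward()."""
    rng = random.Random(seed)
    theta = [0.0] * dim
    for _ in range(steps):
        samples = []
        for _ in range(n_dirs):
            # Random direction; evaluate a small step forwards and backwards.
            delta = [rng.gauss(0.0, 1.0) for _ in range(dim)]
            r_plus = reward([t + noise * d for t, d in zip(theta, delta)])
            r_minus = reward([t - noise * d for t, d in zip(theta, delta)])
            samples.append((r_plus, r_minus, delta))
        # Keep only the top_b directions, ranked by max(r_plus, r_minus).
        samples.sort(key=lambda s: max(s[0], s[1]), reverse=True)
        best = samples[:top_b]
        # Scale the step by the standard deviation of the rewards actually used.
        sigma = statistics.pstdev([r for rp, rm, _ in best for r in (rp, rm)]) or 1.0
        for i in range(dim):
            grad_i = sum((rp - rm) * d[i] for rp, rm, d in best)
            theta[i] += step_size / (top_b * sigma) * grad_i
    return theta


theta = ars(dim=3)
print([round(t, 2) for t in theta], round(reward(theta), 4))
```

Dividing by the reward standard deviation keeps the step size roughly constant regardless of how large the rewards are, which is one of the small "augmentations" over plain random search.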

Read

Archive 2018

The Conclusion of the Trilogy on Autoencoders, or Genesis 2.0 – Right up to the Creation of the First Humans

In the beginning, there was chaos and noise. Epoch One. And the great Generator said: Let there be light! And there was light. And the Discriminator saw the light, that it was good…

Read

Archive 2018

The First Part of the Trilogy on the Use of Autoencoders – Noise Reduction

This time, the focus is on noise reduction. First, I will contaminate the entire dataset with a large amount of colour noise. I will compress each damaged image (to 512 numbers) and then decompress it again. The excess noise was…

Read

Archive 2018

The Second Part of the Trilogy on Autoencoders – Morphing and Finding Similar Images

This time, we aim to find the most similar image in the dataset. Simply encode a face into a matrix of a few numbers. Then look at which matrix of encoded numbers…
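Once the encoder has turned every face into a short code, "the most similar image" reduces to a nearest-neighbour search over those codes, and morphing reduces to interpolating between two codes and decoding each step. A minimal sketch, assuming the codes are already computed and using Euclidean distance; the dictionary of codes is hypothetical toy data, and the decoder is not shown.

```python
import math


def distance(a, b):
    """Euclidean distance between two latent codes."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def most_similar(query_code, codes):
    """Name of the stored image whose code is nearest to query_code."""
    return min(codes, key=lambda name: distance(query_code, codes[name]))


def morph(code_a, code_b, steps):
    """Evenly spaced codes from code_a to code_b; decoding each gives a morph."""
    return [
        [a + (b - a) * i / (steps - 1) for a, b in zip(code_a, code_b)]
        for i in range(steps)
    ]


# Hypothetical latent codes; real ones would come from the trained encoder.
codes = {
    "face_a": [0.2, 1.1, -0.5, 0.0],
    "face_b": [1.9, -0.3, 0.4, 1.2],
    "face_c": [0.25, 1.0, -0.4, 0.1],
}

query = [0.3, 1.0, -0.45, 0.05]
print(most_similar(query, codes))  # face_c
frames = morph(codes["face_a"], codes["face_b"], steps=5)
```

A linear scan is fine for a few thousand faces; larger collections would usually call for an approximate nearest-neighbour index.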

Read

Archive 2018

What is an Autoencoder and How Does it Compress Data?

I am continuing my study of artificial intelligence at the 'Russian school'. It's a tough but good school. It took me 10 minutes to solve the autoencoder task with an error rate of 6.7%, but three days of experimentation to bring the error down to 5%.

Read

Archive 2018

Advanced Machine Learning at the National Research University Higher School of Economics

I am currently studying advanced machine learning at the National Research University Higher School of Economics, which is a top Russian university for economics and ranks among...

Read

Archive 2017

Google's New Intelligence Can Do a Wonderful Thing: It Raises Offspring

Google's AI raises offspring! Google's developers have introduced the AutoML (Automated Machine Learning) system, which nurtures offspring, that is, new artificial intelligences. The nurtured intelligence receives a task and then reports back to the parent intelligence on how it performed. The parent system evaluates the result and creates a new, slightly modified version of the nurtured intelligence that handles such tasks better. And so it continues, over and over again, thousands and thousands of times. Through this relatively simple process, the AI raises a 'child network' that performs significantly better than networks designed by human developers.
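The parent/child loop described above can be sketched as a simple (1+1) evolutionary search: mutate the current child slightly, evaluate it, and keep the mutant if it scores at least as well. This is a deliberately simplified stand-in, not Google's method (its published AutoML work used a reinforcement-learning controller to propose architectures). The "architecture" here is a hypothetical list of layer widths, and `score` is a made-up fitness function standing in for training and validating each child network.

```python
import random


def score(widths):
    # Hypothetical fitness: stands in for "train the child network and measure
    # validation accuracy". Here it simply prefers a width of 64 in every layer.
    return -sum((w - 64) ** 2 for w in widths)


def mutate(widths, rng):
    # Slightly modified copy: change one layer's width by a small random amount.
    child = list(widths)
    i = rng.randrange(len(child))
    child[i] = max(1, child[i] + rng.choice([-8, -4, 4, 8]))
    return child


def evolve(initial, generations=2000, seed=0):
    rng = random.Random(seed)
    best, best_score = initial, score(initial)
    for _ in range(generations):
        candidate = mutate(best, rng)
        candidate_score = score(candidate)
        # The parent keeps the child if it is at least as good as the current best.
        if candidate_score >= best_score:
            best, best_score = candidate, candidate_score
    return best, best_score


best, best_score = evolve([16, 128, 200])
print(best, best_score)
```

In a real system each call to `score` would mean training a network, which is why such searches needed very large amounts of compute.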

Read

Archive 2017

Google's Artificial Intelligence Learned to Play Chess Perfectly in Four Hours

Remarkable progress! Starting from nothing but the rules of chess, AlphaZero taught itself to play in four hours, after which it faced the world's highest-rated chess programme – Stockfish. What followed was the best chess in the universe…

Read

Archive 2017

The Pentagon is Searching for Artificial Intelligence that Learns from Its Own Mistakes

I must admit that I am only familiar with self-learning AI in theory. I look forward to creating a few of my own models :) In the last three months…

Read