This Week, Google Unveiled Its Latest Technological Toy – Bidirectional Encoder Representations from Transformers, or BERT

How does BERT differ from traditional NLP models like word2vec and GloVe? Word2vec and similar models generate context-free word embeddings: each word is represented by one fixed vector (for example, 300 numbers that mathematically represent that word), no matter which sentence it appears in.

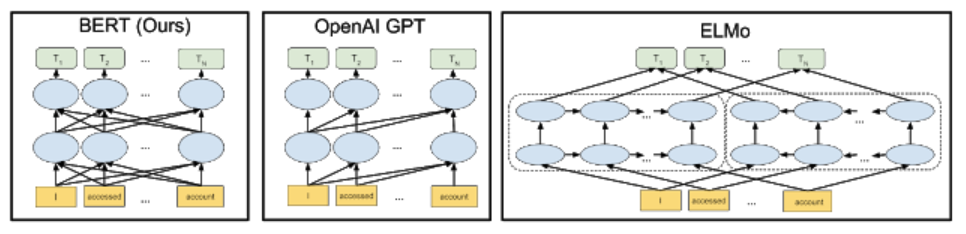

BERT stands out because it is deeply bidirectional: when it represents a word, it draws on the context both before and after that word. It is also pre-trained without supervision, meaning it learns from plain text that has been neither classified nor labelled by a human.
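To make "without supervision" concrete, here is a minimal sketch of how training examples can be built from unlabelled text by hiding words and asking the model to recover them from the words on both sides. This is a simplification of the masked-word objective BERT uses; the function name `make_masked_example` and its parameters are made up for illustration, and the real procedure includes extra details that are left out here.

```python
import random


def make_masked_example(tokens, mask_prob=0.15, seed=0):
    """Hide some tokens; the hidden originals become the training targets.

    Hypothetical helper for illustration only. No human labels are needed:
    the "answers" are simply the words that were hidden.
    """
    rng = random.Random(seed)
    masked = []
    targets = {}  # position -> original token the model should predict
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            masked.append("[MASK]")
            targets[i] = tok
        else:
            masked.append(tok)
    return masked, targets


if __name__ == "__main__":
    sentence = "the man went to the store to buy milk".split()
    masked, targets = make_masked_example(sentence, mask_prob=0.3, seed=1)
    print(masked)   # input shown to the model
    print(targets)  # what it must recover, using words left AND right of each gap
```

Because a hidden word can sit anywhere in the sentence, the model has to use the words on both sides of the gap, which is where the bidirectionality comes from.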

While context-free models have a single numerical representation for a word such as "eye", whatever it means in a given sentence, BERT takes the surrounding words into account. It can therefore distinguish between the eye in your head, the eye in soup, or a poacher's eye.
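The difference can be shown with a toy example. The sketch below is not how BERT computes anything: the two-dimensional vectors are invented, and the "contextual" function merely blends a word's vector with the average of its sentence as a crude stand-in for a real encoder. It only illustrates the idea that a context-free lookup returns the same vector every time, while a contextual representation changes with the neighbouring words.

```python
# Invented 2-dimensional vectors; real embeddings have hundreds of dimensions.
STATIC = {
    "eye": [1.0, 0.0],
    "in": [0.0, 0.0],
    "head": [0.0, 1.0],
    "soup": [0.0, -1.0],
}


def context_free(index, sentence):
    """Word2vec-style lookup: the rest of the sentence is ignored."""
    return STATIC[sentence[index]]


def contextual(index, sentence):
    """Toy stand-in for a contextual encoder (an assumption, not BERT):
    blend the word's own vector with the mean of every word in the
    sentence, to the left and to the right alike."""
    vecs = [STATIC[w] for w in sentence]
    dim = len(vecs[0])
    mean = [sum(v[d] for v in vecs) / len(vecs) for d in range(dim)]
    own = vecs[index]
    return [0.5 * own[d] + 0.5 * mean[d] for d in range(dim)]


if __name__ == "__main__":
    a = ["eye", "in", "head"]
    b = ["eye", "in", "soup"]
    print(context_free(0, a) == context_free(0, b))  # same vector for "eye"
    print(contextual(0, a), contextual(0, b))        # vectors now differ
```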

BERT also learns to model relationships between sentences by pre-training on a simple task that can be generated from any text corpus, as noted by Devlin and Chang. It is built on the Transformer, an open-source neural network architecture from Google that relies on a self-attention mechanism and is well suited to NLP.
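The sentence-level task can be pictured as follows: given two sentences, decide whether the second really follows the first in the source text. The sketch below shows one way such pairs could be generated from any ordered list of sentences. The function name `make_sentence_pairs`, the 50/50 split, and the sample sentences are assumptions made for illustration, not the authors' code.

```python
import random


def make_sentence_pairs(sentences, seed=0):
    """Build (sentence_a, sentence_b, is_next) triples from ordered sentences.

    Hypothetical helper. Roughly half of the pairs keep the true next
    sentence; the rest swap in a different sentence from the same corpus.
    Assumes the sentences are distinct.
    """
    if len(sentences) < 3:
        raise ValueError("need at least 3 sentences to draw a negative example")
    rng = random.Random(seed)
    pairs = []
    for i in range(len(sentences) - 1):
        if rng.random() < 0.5:
            pairs.append((sentences[i], sentences[i + 1], True))
        else:
            # Any sentence except the current one and its true successor.
            candidates = [s for j, s in enumerate(sentences) if j not in (i, i + 1)]
            pairs.append((sentences[i], rng.choice(candidates), False))
    return pairs


if __name__ == "__main__":
    corpus = [
        "The man went to the store.",
        "He bought a gallon of milk.",
        "Penguins are flightless birds.",
        "They live mostly in the Southern Hemisphere.",
    ]
    for a, b, is_next in make_sentence_pairs(corpus, seed=3):
        print(is_next, "|", a, "->", b)
```

The labels come for free from the order of the text, which is why no human annotation is needed.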

When tested on the Stanford Question Answering Dataset (SQuAD v1.1), a reading comprehension dataset containing questions about a set of Wikipedia articles, BERT achieved an F1 score of 93.2%, surpassing both the previous state-of-the-art score of 91.6% and the human-level score of 91.2%.
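SQuAD results are usually reported as a token-level F1 score, which rewards a predicted answer for the words it shares with the reference answer. The sketch below shows the idea in simplified form. It is not the official evaluation script: it only lowercases and splits on whitespace, whereas the official scoring also normalises punctuation and articles and compares against several reference answers.

```python
from collections import Counter


def token_f1(prediction, truth):
    """Simplified token-overlap F1 between a predicted and a reference answer."""
    pred_tokens = prediction.lower().split()
    true_tokens = truth.lower().split()
    # Multiset intersection counts each shared token at most as often
    # as it appears in both answers.
    overlap = sum((Counter(pred_tokens) & Counter(true_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)  # share of predicted words that are right
    recall = overlap / len(true_tokens)     # share of reference words that were found
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    print(token_f1("in Paris", "Paris"))
    print(token_f1("Paris", "Paris"))
```

A dataset-level figure such as the one quoted above is the average of per-question scores of this kind.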

Original article: https://ai.googleblog.com/…/open-sourcing-bert-state-of-art…

GitHub: https://github.com/google-research/bert

Authors: Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova

Original source: wordpress