Unsupervised MT - Machine Learning Without a Teacher

It is still more of a concept, with relatively weak results, but I am surprised that it works at all. Let’s take a look at machine translation. Traditionally, a machine translation system is trained on a large number of parallel sentences, i.e. the same sentence in both languages (for example, Czech “Miluji tě.” = English “I love you.”).

Last year I published a post here about how I taught a computer to translate between Czech and English in 15 minutes. That was classic supervised learning: during training, I showed the neural network what I considered a good translation.

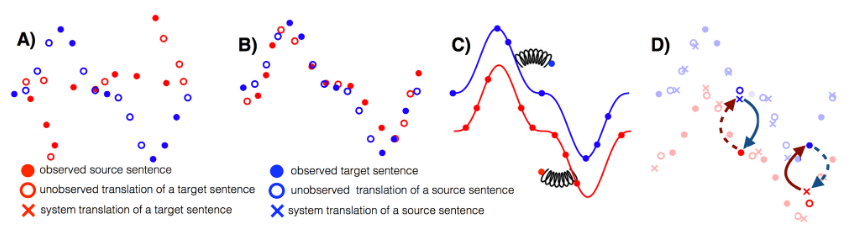

This approach is different. Instead of parallel sentences, you feed the computer two large monolingual corpora, one in each language. The whole trick is that the algorithm generates the parallel data itself, through iterative back-translation.
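To make the back-translation loop more concrete, here is a minimal toy sketch in Python. It is not the method from the paper: the "translation models" are hypothetical word-for-word lookup tables, the seed Czech→English table stands in for whatever weak initial model the unsupervised method starts from, and the made-up corpora have equal-length sentences so that words can be aligned by position. The real system uses neural or phrase-based models plus additional training objectives, which are left out here. What the sketch does show is the data flow: each model translates real monolingual text into the other language, and the resulting (synthetic input, real output) pairs become training data for the model going the opposite way.

```python
from collections import Counter, defaultdict

# Toy "translation model": a word-for-word lookup table (hypothetical, for illustration).
# Words the table does not know are copied through unchanged.
def translate(table, sentence):
    return " ".join(table.get(word, word) for word in sentence.split())

def train(pairs):
    """Fit a lookup table from (source, target) sentence pairs.

    Words are aligned by position, which only works because the toy
    sentences below have the same length on both sides.
    """
    counts = defaultdict(Counter)
    for src, tgt in pairs:
        for s, t in zip(src.split(), tgt.split()):
            counts[s][t] += 1
    # Keep the most frequent target word for each source word.
    return {s: c.most_common(1)[0][0] for s, c in counts.items()}

def iterative_back_translation(mono_cs, mono_en, cs2en, en2cs, rounds=2):
    for _ in range(rounds):
        # Real Czech -> synthetic English; update en->cs on (synthetic en, real cs).
        synthetic = [(translate(cs2en, cs), cs) for cs in mono_cs]
        en2cs = {**en2cs, **train(synthetic)}
        # Real English -> synthetic Czech; update cs->en on (synthetic cs, real en).
        synthetic = [(translate(en2cs, en), en) for en in mono_en]
        cs2en = {**cs2en, **train(synthetic)}
    return cs2en, en2cs

# Two small monolingual corpora (made up and unpaired: nothing says which sentences correspond).
mono_cs = ["pes spí", "kočka jí", "pes jí"]
mono_en = ["cat sleeps", "dog eats", "dog sleeps"]
# Weak initial cs->en model (hypothetical seed); en->cs starts empty.
seed_cs2en = {"pes": "dog", "kočka": "cat", "spí": "sleeps", "jí": "eats"}

cs2en, en2cs = iterative_back_translation(mono_cs, mono_en, seed_cs2en, {})
print(translate(en2cs, "cat sleeps"))  # kočka spí
```

The sentence “kočka spí” does not occur in the Czech corpus; the en→cs table learned every word it needs purely from back-translated data, without ever seeing a real parallel pair.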

Sources:

https://arxiv.org/pdf/1804.07755v2.pdf

https://arxiv.org/abs/1804.07755

http://ruder.io/10-exciting-ideas-of-2018-in-nlp/…

GIT: https://github.com/facebookresearch/UnsupervisedMT

Original source: WordPress