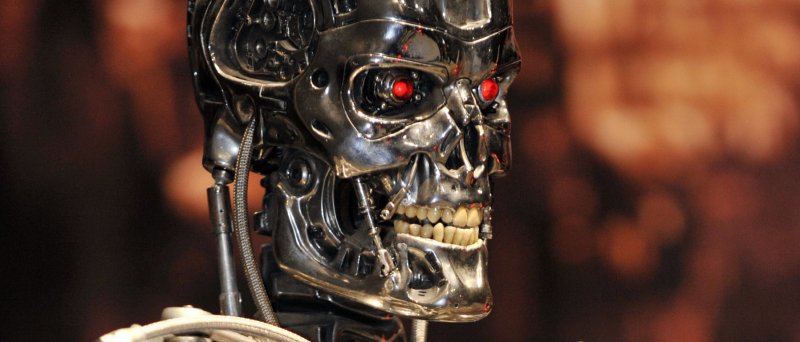

“AI can kill us! Let’s limit killer robots while we still can,” says the UN

A total of 22 countries, predominantly with low military budgets and limited technological know-how, have called for a complete ban on the development of killer robots. This was discussed this year in Geneva during a daily debate within the Convention on Conventional Weapons (CCW) working group. The traditional leader in the realm of killer robots is the USA. Just the facts.

By the end of the article, there is a palpable sense of technoscepticism from the author: “We are unlikely to see artificial intelligence become self-aware and think like a human anytime soon. Perhaps achieving something like that will require entirely new hardware, such as quantum computers.”

-

In my opinion, the last paragraph is somewhat disconnected from the article's theme. A robot does not need to be especially intelligent to kill effectively; a drone armed with anthrax would suffice, for instance.

-

There have already been a few attempts at self-awareness in AI: cooperative robots that must account for their own existence, robots guessing colours in logical puzzles, and even philosophising robots pondering the meaning of life, as in Google's experiment with predicting movie subtitles.

One could counter this with the “Chinese Room” argument, which holds that a robot does not understand what it writes. Personally, I find it plausible that some AIs exhibit a certain degree of awareness. (Beware: people use the term “awareness” in several senses and often do not notice when they shift from one to another.)

Original source: wordpress