3 libraries that will help you interpret your model

A dry definition says that model interpretability is the ability to verify and interpret the decisions of a predictive model, which makes the decision-making process transparent. Simply put, people want to stay at the helm and prefer to make decisions themselves. They do not want to blindly trust AI models as if they were magical black boxes that predictions simply drop out of. They want clear, comprehensible justifications for why the model made a particular decision. In short, autonomous but inscrutable artificial intelligence is gradually giving way to understandable "augmented intelligence". Today we introduce three of the best libraries that can help with this.

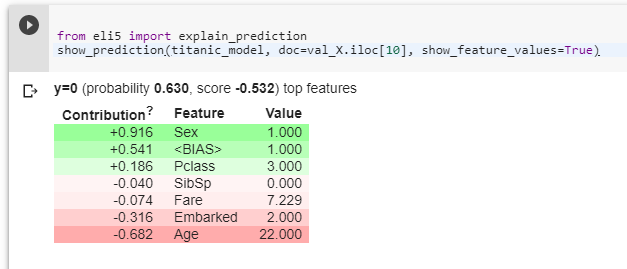

I thoroughly tested all three libraries over the weekend and got them running in Colab, so if you run into any issues, just drop me a message. The image shows the winning Titanic model built by students of the Ječná school (TMT group), explained with the ELI5 library. It shows why the model "thinks" that passenger 10 from the test dataset will not survive. There are three main reasons:

- he is a man (sex=1)

- most people on the Titanic did not survive (BIAS)

- he purchased a third-class ticket (Pclass=3).

Conversely, his chances are slightly improved by the fact that:

- he is 22 years old (Age=22)

- he boarded at the port encoded as 2 (Embarked=2).
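For a linear model such as logistic regression, this kind of breakdown can be reproduced by hand: each feature's contribution is its weight multiplied by its value, the intercept appears as `<BIAS>`, and the contributions add up to the model's score (the log-odds of survival). The sketch below assumes a logistic regression; the weights, intercept and passenger values are hypothetical and are not taken from the Ječná model, whose type the image does not state. For tree-based models ELI5 uses a different, decision-path-based decomposition.

```python
import math

# Hypothetical logistic-regression weights (log-odds of survival); NOT the Ječná model.
WEIGHTS = {"Sex": -2.5, "Pclass": -0.9, "Age": 0.01, "Embarked": 0.1}
INTERCEPT = -0.5  # the term ELI5 displays as <BIAS> for linear models


def explain_linear(weights, intercept, row):
    """Return (contributions, score): weight * value per feature, plus the bias term."""
    contributions = {name: w * row[name] for name, w in weights.items()}
    contributions["<BIAS>"] = intercept
    score = sum(contributions.values())  # log-odds of the positive class
    return contributions, score


# Hypothetical passenger resembling the one described above.
passenger = {"Sex": 1, "Pclass": 3, "Age": 22, "Embarked": 2}
contribs, score = explain_linear(WEIGHTS, INTERCEPT, passenger)

# Print contributions from the most to the least influential.
for name, value in sorted(contribs.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>9}: {value:+.2f}")
print(f"P(survived) = {1 / (1 + math.exp(-score)):.3f}")
```

Negative contributions push the prediction towards "did not survive", positive ones towards survival, which is how the table in the image should be read.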

Now, onto the libraries:

LIME

LIME (Local Interpretable Model-agnostic Explanations) explains individual predictions of a complex model by approximating it, in the neighbourhood of one specific instance, with a simple interpretable model such as a sparse linear model. It can explain any black-box classifier with two or more classes, as long as the classifier can return a probability for each class. LIME supports tabular data, text, and images.
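The core idea can be sketched in a few lines of NumPy: perturb the instance, query the black box, weight the samples by how close they are to the instance, and fit a weighted linear model whose slopes serve as the local explanation. This is a simplified illustration under our own assumptions (Gaussian perturbations with a user-supplied per-feature `scale`, an exponential proximity kernel, plain weighted least squares); `lime_sketch` and the toy `black_box` are hypothetical names, not part of the lime package.

```python
import numpy as np


def lime_sketch(predict_fn, x, scale, num_samples=500, kernel_width=None, seed=0):
    """Locally weighted linear surrogate around instance x (simplified LIME idea).

    Returns (intercept, slopes), with slopes expressed in the features' original units.
    """
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    scale = np.asarray(scale, dtype=float)
    d = x.shape[0]
    if kernel_width is None:
        kernel_width = 0.75 * np.sqrt(d)  # heuristic similar to lime's tabular default
    z = rng.normal(size=(num_samples, d))  # perturbations in units of `scale`
    samples = x + z * scale  # points in the neighbourhood of x
    y = np.asarray(predict_fn(samples), dtype=float)  # query the black box
    dist = np.linalg.norm(z, axis=1)
    weights = np.exp(-(dist ** 2) / kernel_width ** 2)  # closer samples count more
    sw = np.sqrt(weights)[:, None]
    design = np.column_stack([np.ones(num_samples), z])  # intercept + features
    # Weighted least squares: scale each row by sqrt(weight), then solve.
    coef, *_ = np.linalg.lstsq(design * sw, y * sw[:, 0], rcond=None)
    return coef[0], coef[1:] / scale  # convert slopes back to original units


# Hypothetical black box: a nonlinear function of two features.
def black_box(X):
    return X[:, 0] ** 2 + X[:, 1]


intercept, slopes = lime_sketch(black_box, x=[1.0, 0.0], scale=[0.1, 0.1])
print(intercept, slopes)  # slopes approximate the local gradient at x
```

For a classifier, `predict_fn` would return the probability of the class being explained. The lime package itself is used through `LimeTabularExplainer` from `lime.lime_tabular` and its `explain_instance(row, model.predict_proba, num_features=...)` method; by default it also discretises continuous features and fits a ridge regression rather than plain least squares.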

SHAP

SHAP (SHapley Additive exPlanations) is a Python library implementing a unified approach to explaining the output of any machine learning model. It connects game theory with local explanations: each feature receives a share of the prediction given by its Shapley value. It unifies several earlier methods, including LIME and DeepLIFT, and offers a consistent and locally accurate additive feature attribution method based on expectations.
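The Shapley value gives feature i its average marginal contribution over all coalitions S of the other features: phi_i = sum over S of |S|! (n - |S| - 1)! / n! * [v(S + {i}) - v(S)]. The pure-Python sketch below computes this exactly for a toy model, assuming that features outside a coalition are set to a single baseline vector, a simplification of the expectation over background data that SHAP uses. The cost grows as 2^n, so this only illustrates the definition; the shap library relies on much faster estimators, such as an exact polynomial-time algorithm for tree models. The names `exact_shapley` and `model` are hypothetical.

```python
from itertools import combinations
from math import factorial


def exact_shapley(f, x, baseline):
    """Exact Shapley values of f at x, enumerating every coalition of features."""
    n = len(x)

    def value(coalition):
        # Model output when only features in `coalition` take their real values.
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return f(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


# Toy model with an interaction between features 0 and 1.
def model(z):
    return 2 * z[0] + z[0] * z[1] - z[2]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]  # hypothetical reference point
phi = exact_shapley(model, x, baseline)
print(phi)
```

The interaction term z0 * z1 is split equally between features 0 and 1, giving phi of about [3, 1, -3]. The values sum to f(x) - f(baseline) = 1, which is the local accuracy property SHAP guarantees.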

ELI5

ELI5 lets you visualise and debug a variety of machine learning models through a unified API, helping you understand classifiers and explain their individual predictions. It supports scikit-learn, XGBoost, LightGBM, CatBoost, and more. The library also implements several algorithms for inspecting black-box models, such as TextExplainer, which explains the predictions of any text classifier using the LIME algorithm, and the permutation importance method, which computes feature importances for black-box estimators.
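Permutation importance works with any fitted model: shuffle one feature's column while leaving the others intact, and measure how much the model's score drops; the model is never retrained. ELI5 exposes this as `eli5.sklearn.PermutationImportance`, and scikit-learn ships `sklearn.inspection.permutation_importance`. The pure-Python sketch below illustrates the method and is not either library's code; the function names and the toy model are hypothetical.

```python
import random


def permutation_importance(predict, X, y, score, n_repeats=5, seed=0):
    """Mean drop in score when each column of X is shuffled (model is not refit)."""
    rng = random.Random(seed)
    base = score(y, [predict(row) for row in X])
    importances = []
    for col in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [row[col] for row in X]
            rng.shuffle(shuffled)
            # Copy each row, replacing only the shuffled column.
            X_perm = [row[:col] + [v] + row[col + 1:] for row, v in zip(X, shuffled)]
            drops.append(base - score(y, [predict(row) for row in X_perm]))
        importances.append(sum(drops) / n_repeats)
    return importances


def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical black box that only looks at the first feature.
def uses_only_first(row):
    return 1 if row[0] > 0.5 else 0


data_rng = random.Random(42)
X = [[data_rng.random(), data_rng.random()] for _ in range(200)]
y = [uses_only_first(row) for row in X]
importances = permutation_importance(uses_only_first, X, y, accuracy)
print(importances)
```

Shuffling the unused second feature leaves every prediction unchanged, so its importance is exactly zero, while shuffling the first feature breaks roughly half of the predictions.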

Sources:

LIME: https://github.com/marcotcr/lime

SHAP: https://github.com/slundberg/shap

ELI5: https://github.com/TeamHG-Memex/eli5

Freely adapted from: https://www.analyticsindiamag.com/4-python-libraries-for-g…/

Original source: wordpress